Difference between revisions of "Example Stereo Uncalibrated"

From BoofCV

Jump to navigationJump to searchm |

m |

||

| (5 intermediate revisions by the same user not shown) | |||

| Line 8: | Line 8: | ||

Example File: | Example File: | ||

* [https://github.com/lessthanoptimal/BoofCV/blob/v0. | * [https://github.com/lessthanoptimal/BoofCV/blob/v0.40/examples/src/main/java/boofcv/examples/stereo/ExampleStereoUncalibrated.java ExampleStereoUncalibrated.java] | ||

Concepts: | Concepts: | ||

| Line 19: | Line 19: | ||

Videos | Videos | ||

* | * [https://www.youtube.com/watch?v=O_yAdsT8d84 Theoretical Explanation] | ||

* | * [https://www.youtube.com/watch?v=GDI2y8JwLNs&t=115s Demonstration] | ||

Related Tutorials/Example Code: | Related Tutorials/Example Code: | ||

| Line 43: | Line 43: | ||

* | * | ||

* <p> | * <p> | ||

* | * A summary of the algorithm is provided below. There are many ways in which it can be improved upon, but would | ||

* | * increase the complexity. There is also no agreed upon best solution found in the literature and the solution | ||

* | * presented below is "impractical" because of its sensitivity to tuning parameters. If you got a solution | ||

* | * which does a better job let us know! | ||

* </p> | * </p> | ||

* | * | ||

| Line 68: | Line 68: | ||

public class ExampleStereoUncalibrated { | public class ExampleStereoUncalibrated { | ||

public static void main( String | public static void main( String[] args ) { | ||

// Solution below is unstable. Turning concurrency off so that it always produces a valid solution | // Solution below is unstable. Turning concurrency off so that it always produces a valid solution | ||

// The two view case is very challenging and I've not seen a stable algorithm yet | // The two view case is very challenging and I've not seen a stable algorithm yet | ||

| Line 74: | Line 74: | ||

// Successful | // Successful | ||

String name = " | String name = "bobcats_"; | ||

// String name = " | // String name = "mono_wall_"; | ||

// String name = "minecraft_cave1_"; | // String name = "minecraft_cave1_"; | ||

// String name = "chicken_"; | // String name = "chicken_"; | ||

| Line 91: | Line 91: | ||

// String name = "turkey_"; | // String name = "turkey_"; | ||

BufferedImage buff01 = UtilImageIO. | BufferedImage buff01 = UtilImageIO.loadImageNotNull(UtilIO.pathExample("triple/" + name + "01.jpg")); | ||

BufferedImage buff02 = UtilImageIO. | BufferedImage buff02 = UtilImageIO.loadImageNotNull(UtilIO.pathExample("triple/" + name + "02.jpg")); | ||

Planar<GrayU8> color01 = ConvertBufferedImage.convertFrom(buff01,true, ImageType.pl(3,GrayU8.class)); | Planar<GrayU8> color01 = ConvertBufferedImage.convertFrom(buff01, true, ImageType.pl(3, GrayU8.class)); | ||

Planar<GrayU8> color02 = ConvertBufferedImage.convertFrom(buff02,true, ImageType.pl(3,GrayU8.class)); | Planar<GrayU8> color02 = ConvertBufferedImage.convertFrom(buff02, true, ImageType.pl(3, GrayU8.class)); | ||

GrayU8 image01 = ConvertImage.average(color01,null); | GrayU8 image01 = ConvertImage.average(color01, null); | ||

GrayU8 image02 = ConvertImage.average(color02,null); | GrayU8 image02 = ConvertImage.average(color02, null); | ||

// Find a set of point feature matches | // Find a set of point feature matches | ||

List<AssociatedPair> matches = | List<AssociatedPair> matches = ExampleComputeFundamentalMatrix.computeMatches(buff01, buff02); | ||

// Prune matches using the epipolar constraint. use a low threshold to prune more false matches | // Prune matches using the epipolar constraint. use a low threshold to prune more false matches | ||

var inliers = new ArrayList<AssociatedPair>(); | |||

DMatrixRMaj F = | DMatrixRMaj F = ExampleComputeFundamentalMatrix.robustFundamental(matches, inliers, 0.1); | ||

// Perform self calibration using the projective view extracted from F | // Perform self calibration using the projective view extracted from F | ||

| Line 118: | Line 118: | ||

// Compute a transform from projective to metric by assuming we know the camera's calibration | // Compute a transform from projective to metric by assuming we know the camera's calibration | ||

var estimateV = new EstimatePlaneAtInfinityGivenK(); | |||

estimateV.setCamera1(fx,fy,0,cx,cy); | estimateV.setCamera1(fx, fy, 0, cx, cy); | ||

estimateV.setCamera2(fx,fy,0,cx,cy); | estimateV.setCamera2(fx, fy, 0, cx, cy); | ||

var v = new Vector3D_F64(); // plane at infinity | |||

if( !estimateV.estimatePlaneAtInfinity(P2,v)) | if (!estimateV.estimatePlaneAtInfinity(P2, v)) | ||

throw new RuntimeException("Failed!"); | throw new RuntimeException("Failed!"); | ||

DMatrixRMaj K = PerspectiveOps.pinholeToMatrix(fx,fy,0,cx,cy); | DMatrixRMaj K = PerspectiveOps.pinholeToMatrix(fx, fy, 0, cx, cy); | ||

DMatrixRMaj H = MultiViewOps.createProjectiveToMetric(K,v.x,v.y,v.z,1,null); | DMatrixRMaj H = MultiViewOps.createProjectiveToMetric(K, v.x, v.y, v.z, 1, null); | ||

var P2m = new DMatrixRMaj(3, 4); | |||

CommonOps_DDRM.mult(P2,H,P2m); | CommonOps_DDRM.mult(P2, H, P2m); | ||

// Decompose and get the initial estimate for translation | // Decompose and get the initial estimate for translation | ||

var tmp = new DMatrixRMaj(3, 3); | |||

var view1_to_view2 = new Se3_F64(); | |||

MultiViewOps.decomposeMetricCamera(P2m,tmp,view1_to_view2); | MultiViewOps.decomposeMetricCamera(P2m, tmp, view1_to_view2); | ||

//------------------------- Setting up bundle adjustment | //------------------------- Setting up bundle adjustment | ||

| Line 141: | Line 141: | ||

// Construct bundle adjustment data structure | // Construct bundle adjustment data structure | ||

var structure = new SceneStructureMetric(false); | |||

var observations = new SceneObservations(); | |||

// We will assume that the camera has fixed intrinsic parameters | // We will assume that the camera has fixed intrinsic parameters | ||

structure.initialize(1,2,inliers.size()); | structure.initialize(1, 2, inliers.size()); | ||

observations.initialize(2); | |||

var bp = new BundlePinholeSimplified(); | |||

bp.f = fx; | bp.f = fx; | ||

structure.setCamera(0,false,bp); | structure.setCamera(0, false, bp); | ||

// The first view is the world coordinate system | // The first view is the world coordinate system | ||

structure.setView(0,true,new Se3_F64() | structure.setView(0, 0, true, new Se3_F64()); | ||

// Second view was estimated previously | // Second view was estimated previously | ||

structure.setView(1,false,view1_to_view2 | structure.setView(1, 0, false, view1_to_view2); | ||

for (int i = 0; i < inliers.size(); i++) { | for (int i = 0; i < inliers.size(); i++) { | ||

| Line 162: | Line 162: | ||

// substract out the camera center from points. This allows a simple camera model to be used and | // substract out the camera center from points. This allows a simple camera model to be used and | ||

// errors in the this coordinate tend to be non-fatal | // errors in the this coordinate tend to be non-fatal | ||

observations.getView(0).add(i,(float)(t.p1.x-cx),(float)(t.p1.y-cy)); | observations.getView(0).add(i, (float)(t.p1.x - cx), (float)(t.p1.y - cy)); | ||

observations.getView(1).add(i,(float)(t.p2.x-cx),(float)(t.p2.y-cy)); | observations.getView(1).add(i, (float)(t.p2.x - cx), (float)(t.p2.y - cy)); | ||

// each point is visible in both of the views | // each point is visible in both of the views | ||

structure.connectPointToView(i,0); | structure.connectPointToView(i, 0); | ||

structure.connectPointToView(i,1); | structure.connectPointToView(i, 1); | ||

} | } | ||

// initial location of points is found through triangulation | // initial location of points is found through triangulation | ||

MultiViewOps.triangulatePoints(structure,observations); | MultiViewOps.triangulatePoints(structure, observations); | ||

//------------------ Running Bundle Adjustment | //------------------ Running Bundle Adjustment | ||

System.out.println("Performing bundle adjustment"); | System.out.println("Performing bundle adjustment"); | ||

var configLM = new ConfigLevenbergMarquardt(); | |||

configLM.dampeningInitial = 1e-3; | configLM.dampeningInitial = 1e-3; | ||

configLM.hessianScaling = false; | configLM.hessianScaling = false; | ||

var configSBA = new ConfigBundleAdjustment(); | |||

configSBA.configOptimizer = configLM; | configSBA.configOptimizer = configLM; | ||

| Line 184: | Line 184: | ||

BundleAdjustment<SceneStructureMetric> bundleAdjustment = FactoryMultiView.bundleSparseMetric(configSBA); | BundleAdjustment<SceneStructureMetric> bundleAdjustment = FactoryMultiView.bundleSparseMetric(configSBA); | ||

// prints out useful debugging information that lets you know how well it's converging | // prints out useful debugging information that lets you know how well it's converging | ||

bundleAdjustment.setVerbose(System.out, | bundleAdjustment.setVerbose(System.out, null); | ||

// Specifies convergence criteria | // Specifies convergence criteria | ||

bundleAdjustment.configure(1e-6, 1e-6, 100); | bundleAdjustment.configure(1e-6, 1e-6, 100); | ||

// Scaling improve accuracy of numerical calculations | // Scaling improve accuracy of numerical calculations | ||

var bundleScale = new ScaleSceneStructure(); | |||

bundleScale.applyScale(structure,observations); | bundleScale.applyScale(structure, observations); | ||

bundleAdjustment.setParameters(structure,observations); | bundleAdjustment.setParameters(structure, observations); | ||

bundleAdjustment.optimize(structure); | bundleAdjustment.optimize(structure); | ||

// Sometimes pruning outliers help improve the solution. In the stereo case the errors are likely | // Sometimes pruning outliers help improve the solution. In the stereo case the errors are likely | ||

// to already fatal | // to already fatal | ||

var pruner = new PruneStructureFromSceneMetric(structure, observations); | |||

pruner.pruneObservationsByErrorRank(0.85); | pruner.pruneObservationsByErrorRank(0.85); | ||

pruner.prunePoints(1); | pruner.prunePoints(1); | ||

bundleAdjustment.setParameters(structure,observations); | bundleAdjustment.setParameters(structure, observations); | ||

bundleAdjustment.optimize(structure); | bundleAdjustment.optimize(structure); | ||

bundleScale.undoScale(structure,observations); | bundleScale.undoScale(structure, observations); | ||

System.out.println("\nCamera"); | System.out.println("\nCamera"); | ||

for (int i = 0; i < structure.cameras. | for (int i = 0; i < structure.cameras.size; i++) { | ||

System.out.println(structure.cameras[i].getModel().toString()); | System.out.println(structure.cameras.data[i].getModel().toString()); | ||

} | } | ||

System.out.println("\n\nworldToView"); | System.out.println("\n\nworldToView"); | ||

for (int i = 0; i < structure.views. | for (int i = 0; i < structure.views.size; i++) { | ||

System.out.println(structure. | System.out.println(structure.getParentToView(i).toString()); | ||

} | } | ||

// display the inlier matches found using the robust estimator | // display the inlier matches found using the robust estimator | ||

System.out.println("\n\nComputing Stereo Disparity"); | System.out.println("\n\nComputing Stereo Disparity"); | ||

BundlePinholeSimplified cp = structure.getCameras() | BundlePinholeSimplified cp = structure.getCameras().get(0).getModel(); | ||

var intrinsic = new CameraPinholeBrown(); | |||

intrinsic.fsetK(cp.f,cp.f,0,cx,cy,width,height); | intrinsic.fsetK(cp.f, cp.f, 0, cx, cy, width, height); | ||

intrinsic.fsetRadial(cp.k1,cp.k2); | intrinsic.fsetRadial(cp.k1, cp.k2); | ||

Se3_F64 leftToRight = structure. | Se3_F64 leftToRight = structure.getParentToView(1); | ||

computeStereoCloud(image01,image02,color01,color02,intrinsic,intrinsic,leftToRight,0,250); | computeStereoCloud(image01, image02, color01, color02, intrinsic, intrinsic, leftToRight, 0, 250); | ||

} | } | ||

} | } | ||

</syntaxhighlight> | </syntaxhighlight> | ||

Latest revision as of 16:34, 17 January 2022

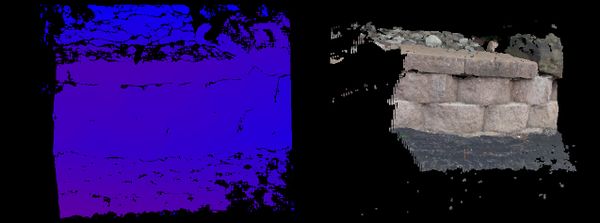

Given two views from an unknown camera compute a dense 3D point cloud. This is a very challenging problem and many of the steps are inherently unstable. This example code only converges to a valid solution about 40% of the time when presented with two well textured input images with good geometry! When it does work it can work almost well as the calibrated cases! If three views are available the problem becomes much more stable.

See code comments below for a summary of all the processing steps involved.

Example File:

Concepts:

- Self calibration / Automatic Calibration

- Projective Geometry

- Metric Geometry

- Point feature association

- Rectification

- Dense stereo processing

Videos

Related Tutorials/Example Code:

- Three View Stereo Uncalibrated

- Stereo Single Calibrated Camera

- Disparity from Calibrated Stereo Camera

- Bundle Adjustment

- Calibrated Stereo Rectification Example

- Camera Calibration Tutorial

Example Code

/**

* <p>

* In this example a stereo point cloud is computed from two uncalibrated stereo images. The uncalibrated problem

* is much more difficult from the calibrated or semi-calibrated problem and the solution below will often fail.

* The root cause of this difficulty is that it is impossible to remove all false associations given two views,

* even if the true fundamental matrix is provided! For that reason it is recommended that you use a minimum of

* three views with uncalibrated observations.

* </p>

*

* <p>

* A summary of the algorithm is provided below. There are many ways in which it can be improved upon, but would

* increase the complexity. There is also no agreed upon best solution found in the literature and the solution

* presented below is "impractical" because of its sensitivity to tuning parameters. If you got a solution

* which does a better job let us know!

* </p>

*

* <ol>

* <li>Feature association</li>

* <li>RANSAC to estimate Fundamental matrix</li>

* <li>Guess and check focal length and compute projective to metric homography</li>

* <li>Upgrade to metric geometry</li>

* <li>Set up bundle adjustment and triangulate 3D points</li>

* <li>Run bundle adjustment</li>

* <li>Prune outlier points</li>

* <li>Run bundle adjustment again</li>

* <li>Compute stereo rectification</li>

* <li>Rectify images</li>

* <li>Compute stereo disparity</li>

* <li>Compute and display point cloud</li>

* </ol>

*

* @author Peter Abeles

*/

public class ExampleStereoUncalibrated {

public static void main( String[] args ) {

// Solution below is unstable. Turning concurrency off so that it always produces a valid solution

// The two view case is very challenging and I've not seen a stable algorithm yet

BoofConcurrency.USE_CONCURRENT = false;

// Successful

String name = "bobcats_";

// String name = "mono_wall_";

// String name = "minecraft_cave1_";

// String name = "chicken_";

// String name = "books_";

// Successful Failures

// String name = "triflowers_";

// Failures

// String name = "rock_leaves_";

// String name = "minecraft_distant_";

// String name = "rockview_";

// String name = "pebbles_";

// String name = "skull_";

// String name = "turkey_";

BufferedImage buff01 = UtilImageIO.loadImageNotNull(UtilIO.pathExample("triple/" + name + "01.jpg"));

BufferedImage buff02 = UtilImageIO.loadImageNotNull(UtilIO.pathExample("triple/" + name + "02.jpg"));

Planar<GrayU8> color01 = ConvertBufferedImage.convertFrom(buff01, true, ImageType.pl(3, GrayU8.class));

Planar<GrayU8> color02 = ConvertBufferedImage.convertFrom(buff02, true, ImageType.pl(3, GrayU8.class));

GrayU8 image01 = ConvertImage.average(color01, null);

GrayU8 image02 = ConvertImage.average(color02, null);

// Find a set of point feature matches

List<AssociatedPair> matches = ExampleComputeFundamentalMatrix.computeMatches(buff01, buff02);

// Prune matches using the epipolar constraint. use a low threshold to prune more false matches

var inliers = new ArrayList<AssociatedPair>();

DMatrixRMaj F = ExampleComputeFundamentalMatrix.robustFundamental(matches, inliers, 0.1);

// Perform self calibration using the projective view extracted from F

// Note that P1 = [I|0]

System.out.println("Self calibration");

DMatrixRMaj P2 = MultiViewOps.fundamentalToProjective(F);

// Take a crude guess at the intrinsic parameters. Bundle adjustment will fix this later.

int width = buff01.getWidth(), height = buff02.getHeight();

double fx = width/2; double fy = fx;

double cx = width/2; double cy = height/2;

// Compute a transform from projective to metric by assuming we know the camera's calibration

var estimateV = new EstimatePlaneAtInfinityGivenK();

estimateV.setCamera1(fx, fy, 0, cx, cy);

estimateV.setCamera2(fx, fy, 0, cx, cy);

var v = new Vector3D_F64(); // plane at infinity

if (!estimateV.estimatePlaneAtInfinity(P2, v))

throw new RuntimeException("Failed!");

DMatrixRMaj K = PerspectiveOps.pinholeToMatrix(fx, fy, 0, cx, cy);

DMatrixRMaj H = MultiViewOps.createProjectiveToMetric(K, v.x, v.y, v.z, 1, null);

var P2m = new DMatrixRMaj(3, 4);

CommonOps_DDRM.mult(P2, H, P2m);

// Decompose and get the initial estimate for translation

var tmp = new DMatrixRMaj(3, 3);

var view1_to_view2 = new Se3_F64();

MultiViewOps.decomposeMetricCamera(P2m, tmp, view1_to_view2);

//------------------------- Setting up bundle adjustment

// bundle adjustment will provide a more refined and accurate estimate of these parameters

System.out.println("Configuring bundle adjustment");

// Construct bundle adjustment data structure

var structure = new SceneStructureMetric(false);

var observations = new SceneObservations();

// We will assume that the camera has fixed intrinsic parameters

structure.initialize(1, 2, inliers.size());

observations.initialize(2);

var bp = new BundlePinholeSimplified();

bp.f = fx;

structure.setCamera(0, false, bp);

// The first view is the world coordinate system

structure.setView(0, 0, true, new Se3_F64());

// Second view was estimated previously

structure.setView(1, 0, false, view1_to_view2);

for (int i = 0; i < inliers.size(); i++) {

AssociatedPair t = inliers.get(i);

// substract out the camera center from points. This allows a simple camera model to be used and

// errors in the this coordinate tend to be non-fatal

observations.getView(0).add(i, (float)(t.p1.x - cx), (float)(t.p1.y - cy));

observations.getView(1).add(i, (float)(t.p2.x - cx), (float)(t.p2.y - cy));

// each point is visible in both of the views

structure.connectPointToView(i, 0);

structure.connectPointToView(i, 1);

}

// initial location of points is found through triangulation

MultiViewOps.triangulatePoints(structure, observations);

//------------------ Running Bundle Adjustment

System.out.println("Performing bundle adjustment");

var configLM = new ConfigLevenbergMarquardt();

configLM.dampeningInitial = 1e-3;

configLM.hessianScaling = false;

var configSBA = new ConfigBundleAdjustment();

configSBA.configOptimizer = configLM;

// Create and configure the bundle adjustment solver

BundleAdjustment<SceneStructureMetric> bundleAdjustment = FactoryMultiView.bundleSparseMetric(configSBA);

// prints out useful debugging information that lets you know how well it's converging

bundleAdjustment.setVerbose(System.out, null);

// Specifies convergence criteria

bundleAdjustment.configure(1e-6, 1e-6, 100);

// Scaling improve accuracy of numerical calculations

var bundleScale = new ScaleSceneStructure();

bundleScale.applyScale(structure, observations);

bundleAdjustment.setParameters(structure, observations);

bundleAdjustment.optimize(structure);

// Sometimes pruning outliers help improve the solution. In the stereo case the errors are likely

// to already fatal

var pruner = new PruneStructureFromSceneMetric(structure, observations);

pruner.pruneObservationsByErrorRank(0.85);

pruner.prunePoints(1);

bundleAdjustment.setParameters(structure, observations);

bundleAdjustment.optimize(structure);

bundleScale.undoScale(structure, observations);

System.out.println("\nCamera");

for (int i = 0; i < structure.cameras.size; i++) {

System.out.println(structure.cameras.data[i].getModel().toString());

}

System.out.println("\n\nworldToView");

for (int i = 0; i < structure.views.size; i++) {

System.out.println(structure.getParentToView(i).toString());

}

// display the inlier matches found using the robust estimator

System.out.println("\n\nComputing Stereo Disparity");

BundlePinholeSimplified cp = structure.getCameras().get(0).getModel();

var intrinsic = new CameraPinholeBrown();

intrinsic.fsetK(cp.f, cp.f, 0, cx, cy, width, height);

intrinsic.fsetRadial(cp.k1, cp.k2);

Se3_F64 leftToRight = structure.getParentToView(1);

computeStereoCloud(image01, image02, color01, color02, intrinsic, intrinsic, leftToRight, 0, 250);

}

}