Tutorial Camera Calibration

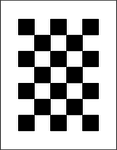

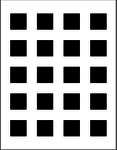

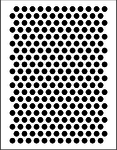

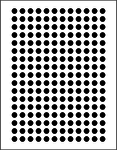

BoofCV contains a toolbox and API for camera calibration with plenty of source code examples and pre-build GUI applications. Camera calibration (also known as camera resectioning) is the process of estimating intrinsic and/or extrinsic parameters. Intrinsic parameters deal with the camera's internal characteristics, such as, its focal length, skew, distortion, and image center. Extrinsic parameters describe its position and orientation in the world. Knowing intrinsic parameters is an essential first step for 3D computer vision, as it allows you to estimate the scene's structure in Euclidean space and removes lens distortion, which degrades accuracy. BoofCV provides fully automated calibration for several planar target types (see pictures above) that can be easily printed on standard sized paper.

Calibration in BoofCV is heavily influenced by Zhengyou Zhang's 1999 paper, "Flexible Camera Calibration By Viewing a Plane From Unknown Orientations". See his webpage below for the paper and theoretical information on camera calibration. A link is also provided to a popular matlab calibration toolbox.

It is possible to either manually collect images and process them or to use a fully automated assisted calibration. Both approaches are described below.

References:

- Zhang's Camera Calibration

- CalTech's Matlab Calibration Toolbox

- R. Hartley, and A. Zisserman, "Multiple View Geometry in Computer Vision"

Papers and How to Cite

If you use BoofCV in academic work please cite it so others can find out about it!

- Citing BoofCV

- Chessboard X-Corner Detector

- Assisted Calibration (to be written)

Shameless Promotion

|

Coordinate Systems

Please refer to Coordinate System page and understand how BoofCV defines its coordinate system.

The Calibration Process

In this section, the camera calibration procedure is broken down into steps and explained. Almost identical steps are followed for calibration a single camera or a stereo camera system. First a quick overview:

- Select a pattern, download (or create your own), and print

- Mount the pattern onto a rigid flat surface

- Take many pictures of the target at different orientations and distances

- Download pictures to compute and select ones that are in focus

- Use provided examples to automatically detect calibration target and compute parameters

- Move calibration file to a safe location

Which calibration target you use is a matter of personal preference. Chessboard patterns tend to produce slightly more accurate results.

Selecting Calibration Target

- Different types of supported planar calibration grids

| Name | Multi-Camera | Blur | Border | Fisheye |

| Chessboard | Yes | Yes | Yes | |

| Square Grid | Yes | Yes | ||

| Circle Hexagonal Grid | Yes | |||

| Circle Regular Grid | Yes | |||

| ECoCheck | Yes | Yes | Yes | Yes |

- Multi-Camera indicates the pattern is appropriate to use for multi-camera calibration as the target can be uniquely identified even when it's partially visible.

- Border means the landmarks can be detected right up the image border.

- Blur means its resilient to blur. If not resilient then the accuracy will degrade linearly to the blur magnitude. This is particularly important with high resolution images which almost always have blur.

- fisheye means it can provide accurate landmark locations even under fisheye distortion.

For multi-camera calibration, ECoCheck is the recommended target type since its a self-identifying pattern and due to the encoded binary patterns you can identify uniquely each landmark even when obstructed. For single camera calibration then either chessboard of ECoCheck can be used as they have symmetry counter acts shrinking caused by blur. The other target types will degrade in accuracy when there is any blur or under lens distortion. Unless you are very careful there will almost always be some blur from being out of focus or motion. This issue has been exaggerated as the resolutions of cameras has increased.

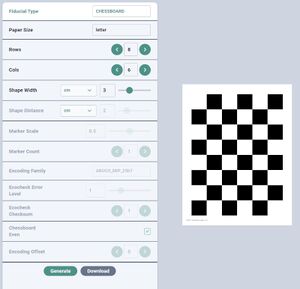

Obtaining Targets PDFs

The first step to creating a calibration target is downloading or creating your own PDF then printing it. After printing you will need to mount the printed document on a rigid board, discussed in the next section

- Create targets in your browser and save as PDF. (link)

- For a list of readily printable calibration targets see the Calibration Targets page.

- The Calibration Targets page also provides instructions on using a BoofCV application to generate your own custom targets.

- Instructions for how to create your own arbitrary calibration target for any sized paper can also be found at the Calibration Targets page.

Screenshot of Calibration Target Website

Mounting and Testing

After printing, the target needs to be mounted on a rigid flat surface. Any warping will decrease calibration accuracy. An ideal surface will be rigid and smooth. Thick foam poster board is easily obtainable and works well. I've also used clipboards with some minor modifications. Cardboard is OK if high precision isn't required well. For a well made target and a decent camera reprojection error is typically around 0.1 pixels.

You should now do a quick test to make sure you did everything correctly and that the software can detect your new target. If you have never done camera calibration before this is also a good way to learn what you should not do when taking your photos for calibration. If you have a webcam installed on your compute this test is easy. To do the quick test simply launch the assisted camera calibration app, configure it for your target type, and see if detects the target.

Detection Trouble Shooting:

- Is your hand touching the calibration pattern? Move your hand.

- Try to ensure the lighting is diffuse, uniform, and avoid glare.

- Does the target your printed look like the target in the preview? If not adjust the settings until it does.

- Harsh shadows can sometimes cause problems.

- Some target types require the entire target to be inside the camera's FOV

Quick comment about target row/column specifications. How rows and columns in a target is counted isn't universally agreed upon. In BoofCV we always count the squares or circles. To help you the PDF generator will the target's name and characteristics in the lower left hand corner.

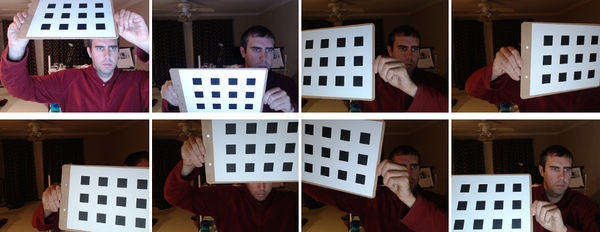

Calibration Target Placement

When collecting calibration images it is best to take a diverse set of in focus image which cover the entire image, especially the image border. An example of how one can do this is down in the figure above. One problem when calibrating a camera is that the residual error can't be trusted as a way to verify correctness. For example, if all the pictures are taken in one region the results will be biased, even if the residual error is low. Also avoid extreme angles or changes distance should be avoided.

A good way to check to see if calibration was done correctly is to see if straight edges are straight. In an undistorted image try moving a ruler to the image border and see if its warped. For stereo images you can see if rectification is correct by clicking on an easily recognizable feature and seeing if it is at the same y-coordinate in the other image.

Supported Camera Models

Camera models are provided for narrow (normal cameras, < 180 degrees FOV), and wide (no limit on FOV, a.k.a fisheye). Narrow camera models take in points that are in pixels or normalized image coordinates . Normalized image coordinates represent a 3D pointing vector with the implicit assumption that , which is what limits the FOV. Wide camera models use spherical coordinates instead since they do not make the assumption that visible points always appear in front of the camera.

If you use the calibration application you can decide if you want to save the found parameters in BoofCV and OpenCV formats. OpenCV is only provided for Brown as the other camera models are not identical between the two libraries.

Pinhole Model

This is the most basic camera model and does not model lens distortion.

where the 3x3 is the intrinsic camera matrix and is also known as .

Brown Model

The Brown camera model [1] is the most popular and models lens distortion radial and tangential ("decentering") distortion. It is appropriate for most lenses with a FOV less than 180 degrees.

where , are radial distortion coefficients, and are the tangential coefficients.

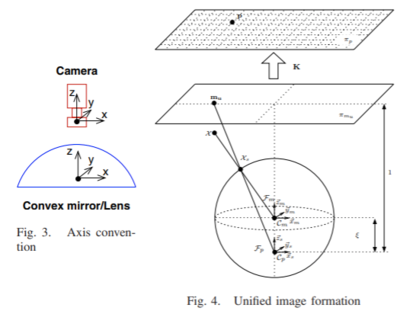

Universal Omni Model

Universal Omni [2] camera model adds a mirror offset to the Brown model and can model parabola, hyperbola, ellipse, and plane mirror equations. The only difference from the Brown camera model is how it converts spherical coordinates into normalized image coordinates:

After this step it has identical equations to Brown.

Kannala-Brandt Model

Kannala-Brandt [3] is a wide camera model that supports perspective, stereographic, equidistance, equisolid angle, and orthogonal projection models. BoofCV's implementation is a bit unusual in that it supports the full camera model as presented in [3], with symmetric and asymmetric distortion terms. Most libraries just support the Radially symmetric.

Radially Symmetric Model:

Radial Distortion Model:

Tangential Distortion Model:

Full Camera Model:

where are the distorted normalized image coordinates, is a unit vector in radial direction, and is a unit vector in tangential direction.

Appendix

- [1] Brown, D. C. "Decentering distortion of lenses, photometric engineering." (1966): 444-462.

- [2] Christopher Mei, and Patrick Rives. "Single view point omnidirectional camera calibration from planar grids." ICRA 2007.

- [3] Kannala, J., and Brandt, S. S. "A generic camera model and calibration method for conventional, wide-angle, and fish-eye lenses" 2006

Calibration Applications

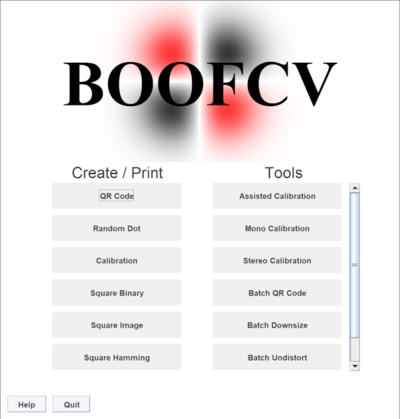

BoofCV comes with applications for camera calibrations which can be used as either commandline tools or GUI applications. The easiest way to use them is to launch the Applications jar then click on the calibration tool you wish to use. The commandline interface provide access to all the options and allow you to select images using Glob or Regex commands. For those who wish to use BoofCV to detect calibration landmarks but want another tool to do the actual calibration, you can save the landmarks detected for each image to a file.

To launch the tools from the command line you can use the following about building/downloading "applications.jar".

java -jar applications.jar CameraCalibrationMono

java -jar applications.jar CameraCalibrationStereo

Links

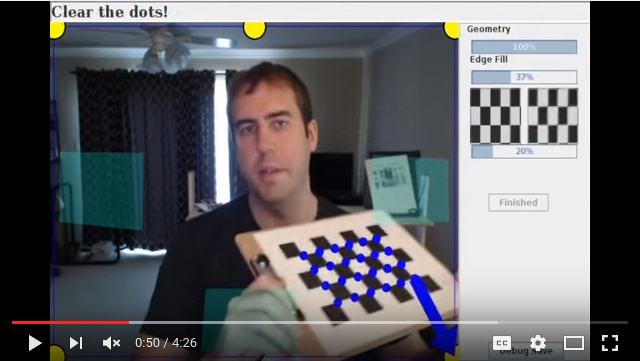

Webcam Assisted

This live webcam calibration application is interactive and designed to ensure that your image set will be in focus, collected around the image border, and have sufficient geometric diversity. This is accomplished by; 1) guiding you to specific locations inside the image, 2) mathematically examining the current solution to see if it has sufficient geometric diversity and will converge, and 3) collecting multiple images at each location but only using the one which is the most in focus. Once you are done capturing images you're then presented with another view where you can view the results for individual images and across the whole dataset.

All data is saved disk along with the found intrinsic camera parameters. Please view the video above before proceeding.

Usage Examples:

java -jar applications.jar CameraCalibrationMono --Camera=0 --Resolution=640:480 CHESSBOARD --Grid=7:5

The arguments specify which camera to use, it's resolution, type of calibration target, and the calibration target's shape. Results will be stored in the "calibration_data" directory. You can also use the --GUI flag and graphically select a camera and configure the calibration target.

Custom Video Sources

The assisted calibration by default uses video feed from Webcam Capture. With a little bit of coding it's easy to add video sources from really anything as long as you can get a BufferedImage. Take a look at CameraCalibration.

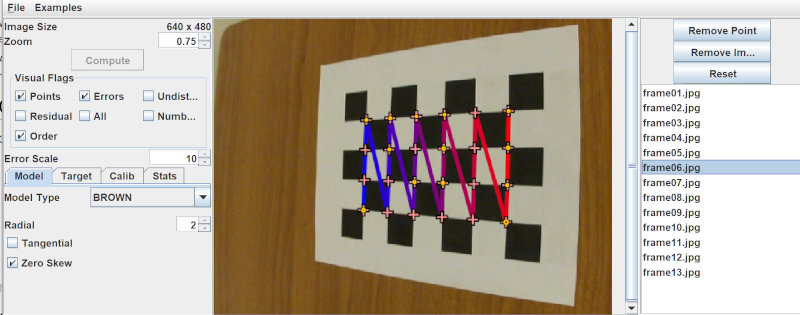

Monocular Calibration

BoofCV provides both command line tools and a GUI application for calibrating different types of cameras. A screenshot is shown below of the application. The application is designed to help you visually verify results quickly and accurately while also providing statistical results. Camera model parameters and target type can both be easily changed in the GUI. Bad features and images can also be removed so that they won't corrupt your results. The latest instruction for the command line tool can be found by typing "--Help" as a flag.

If you would like to use a pre-built application it can be downloaded from here:

That will print out instructions. There are two methods of input with that application. Images from a directory or live video feed from a webcam.

Trouble Shooting

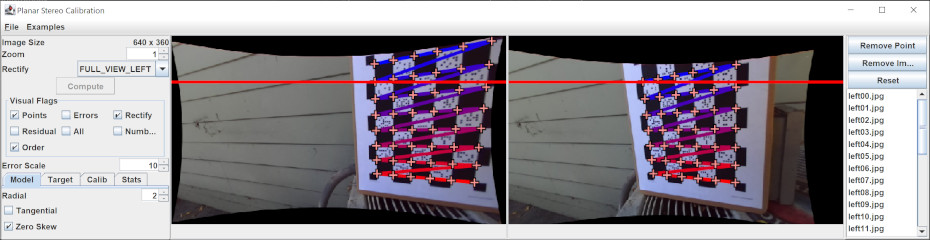

Stereo Calibration

Command Line Interface

The same applications can be used to calibrate from a set of previously collected images saved into a directory. By default a GUI visualizing the results is displayed, but this can be turned off.

java -jar applications.jar CameraCalibration --Directory=images/ CHESSBOARD --Grid=7:5

Results will be stored in the "calibration_data" directory. This includes found calibration parameters along with the collected images. If using the command line isn't your thing then use the --GUI flag and select the input directory.

Calibration from Source Code

Coding up your own software to load and process calibration images is also easy, but more tedious. The calibration application also doesn't support stereo cameras yet.

Example Code:

Batch Undistortion Tools

Removing Lens Distortion from Images

Most computer vision algorithms assume a pin hole camera model. Undistorting an image allows you to treat the image like a pin-hole camera and can make it visually more appearing since the borders are no longer heavily distorted. This operation can be relatively expensive. Internally most algorithm in BoofCV detect features in the distorted image then undistort individual features

Example Code:

Stereo Rectification

Stereo rectification is the process of distorting two images such that both their epipoles are at infinity, typically along the x-axis. When this happens the epipolar lines are all parallel to each other simplifying the problem of finding feature correspondences to searching along the image axis. Many stereo algorithms require images to be rectified first.

Rectification can be done on calibrated or uncalibrated images. Calibration in this case refers to the stereo baseline (extrinsic parameters between two cameras) to be known. Although in practice it is often required that lens distortion be removed from the images even in the "uncalibrated" case.

The uncalibrated case can be done using automatically detected and associated features, however it is much tricker to get right than the calibrated case. Any small association error will cause a large error in rectification. Even if a state of the art and robust feature is used (e.g. SURF) and matches are pruned using the epipolar constraint, this alone will not be enough. Additional knowledge of the scene needs to be taken in account.

OpenCV Friendly

BoofCV can read and write YAML camera calibration files in OpenCV's format. You can calibrate your camera in BoofCV using the assisted calibration app and then use its results in OpenCV!

CameraPinholeRadial param = CalibrationIO.loadOpenCV("boofcv_intrinsic.yaml"));

UtilOpenCV.saveOpenCV(param, "opencv_intrinsic.yaml");

where "boofcv_intrinsic.yaml" is file containing intrinsic camera parameters in BoofCV format and "opencv_intrinsic.yaml" is a file in OpenCV format.

Other Camera Calibration Tools

Here's a list of other tools for camera calibration, in alphabetical order. If you know of other tools that should be listed here post the suggestion on the message board.

![{\displaystyle \left[{\begin{array}{c}x\\y\\1\end{array}}\right]=\left[{\begin{array}{ccc}f_{x}&skew&cx\\0&f_{y}&c_{y}\\0&0&1\end{array}}\right]\left[{\begin{array}{c}x_{n}\\y_{n}\\1\end{array}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3bc5dc871558b89eea0837dc5933204a85ebb83b)

![{\displaystyle \left[{\begin{array}{c}x\\y\\1\end{array}}\right]\sim K\left[{\begin{array}{c}x_{d}\\y_{d}\\1\end{array}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/adeb69a57d7b382aa9793d8e1da5387a69e1f6c5)

![{\displaystyle \left[{\begin{array}{c}x_{d}\\y_{d}\end{array}}\right]=\sum _{i=0}^{i<rad}a_{i}r^{2i}\left[{\begin{array}{c}x_{n}\\y_{n}\end{array}}\right]+\left[{\begin{array}{c}2t_{1}x_{n}y_{n}+t_{2}(r^{2}+2x_{n}^{2})\\t_{1}(r^{2}+2y_{n}^{2})+2t_{2}x_{n}y_{n}\end{array}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/58a17e44fc8f76e787676c885aea55a75294c219)

![{\displaystyle \left[{\begin{array}{c}x_{n}\\y_{n}\end{array}}\right]=\left[{\begin{array}{c}x_{s}/(z_{s}+\epsilon )\\y_{s}/(z_{s}+\epsilon )\end{array}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/27945b9e8443352730a8c97a9c951e917be0c7f9)

![{\displaystyle \left[{\begin{array}{c}x_{n}\\y_{n}\end{array}}\right]=r(\theta )\left[{\begin{array}{c}\cos \phi \\\sin \phi \end{array}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/ee5d8ddca2cefc6db2865fb035c1ed238b0aee9c)